Artificial intelligence is no longer a future concept in academic publishing—it’s already embedded in how researchers write, edit, and submit manuscripts. But here’s the uncomfortable truth: AI output and journal standards are not aligned. Not even close.

While tools promise speed and clarity, journals demand precision, accountability, and ethical transparency. That mismatch is creating a growing risk for researchers—especially those relying too heavily on generative systems without understanding their limitations.

This article breaks down the gap between AI output and journal standards, why it exists, and what serious researchers must do to bridge it without compromising their integrity or their chances of acceptance.

The Rise of AI in Academic Writing

AI tools are now used for everything: drafting abstracts, rephrasing sentences, summarizing literature, and even generating entire manuscripts.

The appeal is obvious:

- Faster writing cycles

- Reduced language barriers

- Improved readability

- Rapid literature condensation

- Assistance for non-native English authors

But speed isn’t the metric journals care about.

Validity is.

Traceability is.

Accountability is.

According to the World Health Organization’s stance on digital health ethics, responsible use of AI must prioritize transparency and human oversight. AI-generated academic text that no human has checked fails that test by default.

This is where the problem begins.

AI systems are trained on vast datasets, but they don’t “understand” research in the way journals expect. They predict language patterns. They don’t validate truth. That distinction matters more than most researchers realize.

What Journals Actually Expect

Academic journals, from high-impact medical titles to smaller regional and specialty publications, operate on strict editorial frameworks.

Their expectations include:

- Originality (no duplication or disguised paraphrasing)

- Author accountability (clear intellectual ownership)

- Methodological accuracy

- Citation integrity

- Ethical compliance

- Reproducibility of findings

Even local newspapers such as the Freeport Journal Standard or the Milton Standard Journal maintain editorial verification standards, just at a different scale.

The point is simple:

Journals are built on trust. AI output is built on probability.

That’s the gap.

Journals don’t just assess language—they assess intent, rigor, and credibility. AI doesn’t operate on those dimensions unless guided carefully.

AI Output vs Journal Standards: Where the Gap Exists

Let’s break this down clearly.

Key Differences Between AI Output and Journal Expectations

| Aspect | AI Output (Generative Systems) | Journal Standards |
| --- | --- | --- |
| Source Transparency | Often unclear or missing | Mandatory and verifiable |
| Originality | Can mimic existing text patterns | Must be genuinely novel |
| Accountability | No authorship responsibility | Full author accountability |
| Accuracy | Probabilistic, not guaranteed | Evidence-based and precise |
| Ethical Compliance | Not inherently built-in | Strictly enforced |
| Citations | Sometimes fabricated or incorrect | Must be real and verifiable |
| Logical Depth | Surface-level coherence | Deep analytical reasoning |
| Consistency | Can vary across sections | Must be structurally aligned |

This table highlights the core issue:

AI produces language. Journals evaluate knowledge.

That distinction is where many AI-assisted submissions fail.

Why Is Controlling the Output of Generative AI Systems Important?

This isn’t just a technical concern—it’s an ethical one.

Uncontrolled AI output can:

- Introduce factual inaccuracies

- Generate fake references

- Oversimplify complex research findings

- Mask plagiarism under paraphrasing

- Misrepresent study results

The Committee on Publication Ethics (COPE) clearly states that authors are fully responsible for submitted content, regardless of the tools used.

So when researchers ask, “Why is controlling the output of generative AI systems important?”, the answer is direct:

Because you—not the AI—are accountable for every word published under your name.

Controlling AI output is no longer optional. It is a requirement for ethical publishing.

The Hidden Risks Researchers Ignore

Most researchers underestimate how detectable poor AI usage is.

Here’s what journals and reviewers are increasingly spotting:

1. Generic Language Patterns

AI-generated text often lacks discipline-specific nuance. It reads “clean” but shallow.

2. Citation Inconsistencies

Fake or mismatched references are a major red flag.

3. Over-Polished Sections

When one section is noticeably more polished than the rest, reviewers may suspect it was generated externally.

4. Lack of Critical Thinking

AI summarizes—but rarely critiques or interprets deeply.

5. Conceptual Drift

AI may subtly shift the meaning of arguments, especially in literature reviews.

According to research discussed on Wikipedia’s overview of AI in healthcare writing, AI systems still struggle with contextual reasoning—something peer reviewers prioritize.

How Journals Are Responding to AI-Generated Content

Editorial policies are evolving fast.

Many journals now:

- Require AI disclosure statements

- Use AI-detection tools

- Reject manuscripts with unverifiable citations

- Demand raw data transparency

- Enforce stricter peer-review scrutiny

Some publishers have taken a stricter stance. As reported by Nature, several journals explicitly ban listing AI tools as authors and require full human accountability.

In practical terms, this means:

- You can use AI—but you must disclose it

- You can refine language—but not outsource thinking

- You can automate drafts—but not responsibility

The direction is clear:

AI is allowed—but only under control.

For more on AI usage, see Combining AI and Human Editing: Best Workflow for Researchers.

The Technical Problem: AI Doesn’t Understand Research Context

Here’s the deeper issue most blogs ignore.

AI doesn’t fail because it’s “bad.”

It fails because it’s context-blind.

Generative models:

- Don’t understand the study design

- Don’t evaluate statistical validity

- Don’t recognize field-specific nuance

- Don’t track argument consistency across sections

This leads to subtle but critical issues:

- Misaligned hypotheses

- Incorrect interpretation of results

- Weak discussion sections

- Inconsistent terminology

For journals, these aren’t minor flaws. They’re rejection triggers.

Case Scenarios: Where AI Output Breaks Down

To make this practical, here are common failure points:

Scenario 1: Literature Review

AI summarizes sources, but:

- Misses key debates

- Fails to compare studies

- Lacks critical evaluation

Result: Weak intellectual contribution.

Scenario 2: Methods Section

AI may:

- Generalize procedures

- Omit technical specifics

- Misrepresent methodology

Result: Lack of reproducibility.

Scenario 3: Results Interpretation

AI often:

- Overstates findings

- Ignores limitations

- Uses vague conclusions

Result: Reviewer skepticism.

Scenario 4: Citations

AI-generated citations may:

- Not exist

- Be mismatched

- Be outdated

Result: Immediate credibility loss.
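Checking references is tedious, but part of the job can be automated. The Python sketch below is a first-pass filter, not a verifier. It assumes author-year in-text citations such as (Lee, 2021). It also assumes a reference list already parsed into dictionaries with hypothetical `author`, `year` and `doi` keys.

The script flags three problems:
- citations with no matching entry
- entries that are never cited
- DOIs that are not even well formed

A syntactically valid DOI can still be fabricated, so every DOI should still be resolved at doi.org or looked up in Crossref.

```python
import re

# Assumed in-text style: author-year, e.g. (Lee, 2021) or (Smith et al., 2019).
CITATION_RE = re.compile(r"\(([A-Z][A-Za-z'-]+)(?: et al\.)?, (\d{4})\)")
# A common DOI shape ("10." + registrant digits + "/" + suffix); syntax only, not existence.
DOI_RE = re.compile(r"^10\.\d{4,9}/\S+$")


def check_citations(manuscript: str, references: list[dict]) -> list[str]:
    """Cross-check in-text citations against a parsed reference list."""
    problems = []
    # Key each reference by (first-author surname, year) to match the in-text form.
    ref_keys = {(ref["author"], ref["year"]) for ref in references}
    # findall returns (surname, year) tuples because the pattern has two groups.
    cited = set(CITATION_RE.findall(manuscript))

    for author, year in sorted(cited - ref_keys):
        problems.append(f"In-text citation ({author}, {year}) has no reference entry")
    for author, year in sorted(ref_keys - cited):
        problems.append(f"Reference {author} {year} is never cited in the text")
    for ref in references:
        doi = ref.get("doi")
        if doi is not None and not DOI_RE.match(doi):
            problems.append(f"Malformed DOI for {ref['author']} {ref['year']}: {doi!r}")
    return problems


if __name__ == "__main__":
    text = "Earlier work (Smith et al., 2019) conflicts with a later trial (Lee, 2021)."
    refs = [
        {"author": "Smith", "year": "2019", "doi": "10.1000/xyz123"},
        {"author": "Garcia", "year": "2018", "doi": "doi:10.1000/abc"},
    ]
    for problem in check_citations(text, refs):
        print(problem)
```

Anything this reports needs a human look. An empty result only means the citations are internally consistent. It does not mean the sources exist or say what the manuscript claims.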

Bridging the Gap: What Researchers Must Do

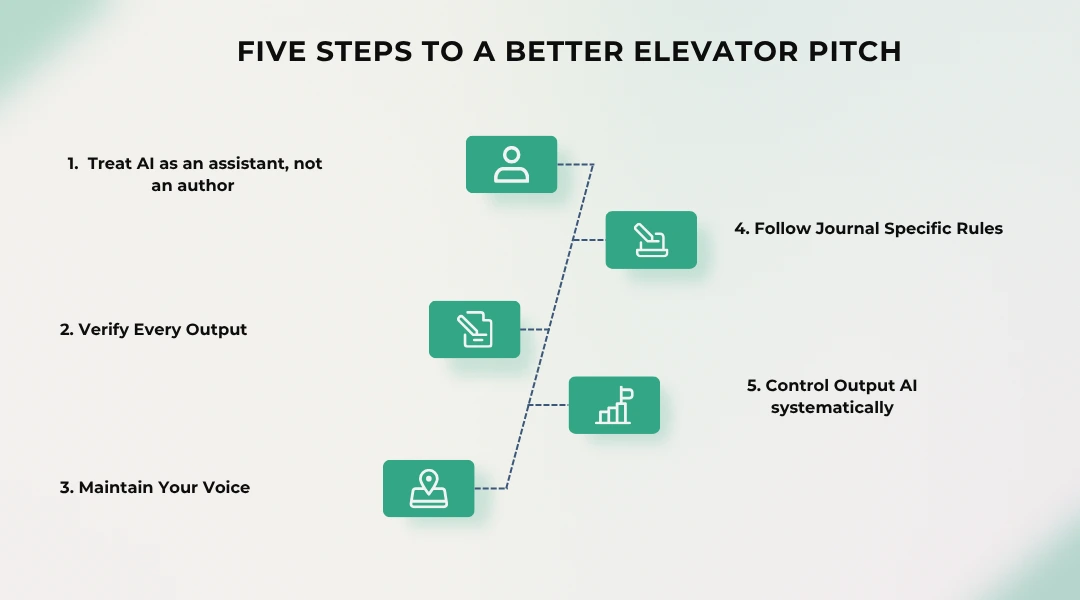

If you’re serious about publishing, you can’t just “use AI.” You need to manage it strategically.

1. Treat AI as an Assistant, Not an Author

Use it for:

- Language refinement

- Structural suggestions

- Clarity improvements

Not for:

- Data interpretation

- Results generation

- Literature conclusions

2. Verify Every Output

Cross-check:

- Facts

- Citations

- Terminology

Never assume correctness.
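Terminology is the easiest of these three checks to automate. The minimal Python sketch below assumes the manuscript is already split into named sections. It also assumes you supply the groups of interchangeable terms yourself; the groups in the example are hypothetical. For any group where more than one variant appears, it reports which variant was used in which section.

```python
import re


def find_term_drift(sections: dict[str, str], variant_groups: list[list[str]]) -> dict[str, dict]:
    """Report term groups whose variants are mixed within a manuscript.

    Returns {preferred term: {(section, variant): count}} for every group in
    which more than one variant occurs. The first term in each group is
    treated as the preferred form.
    """
    report = {}
    for group in variant_groups:
        usage = {}
        for section, text in sections.items():
            for term in group:
                # Whole-word, case-insensitive match, so "randomized" does not hit "nonrandomized".
                pattern = rf"\b{re.escape(term)}\b"
                hits = len(re.findall(pattern, text, flags=re.IGNORECASE))
                if hits:
                    usage[(section, term)] = hits
        variants_used = {term for _, term in usage}
        if len(variants_used) > 1:
            report[group[0]] = usage
    return report


if __name__ == "__main__":
    manuscript = {
        "Methods": "Participants were randomized into two arms.",
        "Results": "The randomised cohort showed lower scores.",
    }
    groups = [["randomized", "randomised"], ["participants", "subjects"]]
    print(find_term_drift(manuscript, groups))
```

Whole-word matching only catches the spelling and synonym drift you anticipated. It will not notice when an AI tool quietly swaps in a term with a different meaning, which is why the human read-through still matters.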

3. Maintain Your Voice

Journals value the author's own perspective. AI tends to flatten it.

4. Follow Journal-Specific Policies

Each journal has different AI guidelines. Ignoring them is a direct rejection trigger.

5. Control AI Output Systematically

You must actively guide AI by:

- Using precise prompts

- Limiting output scope

- Iteratively refining results

- Validating each section

This directly answers the concern:

Why is controlling the output of generative AI important?

Because uncontrolled output leads to flawed manuscripts.
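One way to make "validating each section" concrete is to pair a narrowly scoped prompt with an automatic before-and-after comparison. The Python sketch below assumes the AI tool is asked only for language edits. The prompt wording is a hypothetical example, and the call to the model itself is left out because it depends on the tool.

After the edit, the script flags any number or author-year citation that was added or dropped, since a pure language pass should change neither.

```python
import re

# Hypothetical scoped prompt: limit the model to language edits only.
EDIT_PROMPT = (
    "Edit the paragraph below for grammar and clarity only. "
    "Do not add, remove, or change any numbers, citations, or claims.\n\n"
    "{paragraph}"
)

# Author-year citations such as (Lee, 2021) or (Smith et al., 2019).
CITATION_RE = re.compile(r"\([A-Z][A-Za-z'-]+(?: et al\.)?, \d{4}\)")
# Numbers such as 42, 9.8, 1,204 or 12.5%.
NUMBER_RE = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def build_prompt(paragraph: str) -> str:
    """Fill the scoped prompt with one paragraph of the manuscript."""
    return EDIT_PROMPT.format(paragraph=paragraph)


def extract_facts(text: str) -> tuple[set[str], set[str]]:
    """Return the numbers and citations found in a passage."""
    citations = set(CITATION_RE.findall(text))
    # Remove citations first so their years are not counted as data values.
    body = CITATION_RE.sub("", text)
    numbers = {n.replace(",", "") for n in NUMBER_RE.findall(body)}
    return numbers, citations


def validate_edit(original: str, revised: str) -> list[str]:
    """List every number or citation the AI edit added or removed."""
    old_nums, old_cites = extract_facts(original)
    new_nums, new_cites = extract_facts(revised)
    issues = [f"Number added: {n}" for n in sorted(new_nums - old_nums)]
    issues += [f"Number removed: {n}" for n in sorted(old_nums - new_nums)]
    issues += [f"Citation added: {c}" for c in sorted(new_cites - old_cites)]
    issues += [f"Citation removed: {c}" for c in sorted(old_cites - new_cites)]
    return issues


if __name__ == "__main__":
    original = "Mean recovery time fell from 14.2 to 9.8 days in 1,204 patients (Smith et al., 2019)."
    revised = "Mean recovery time dropped sharply, from 14.2 to 8.9 days, in 1,204 patients (Lee, 2021)."
    for issue in validate_edit(original, revised):
        print(issue)
```

Here the edit swapped 9.8 for 8.9 and replaced the Smith et al. citation with Lee. The check reports this as four issues. It says nothing about whether "dropped sharply" overstates the finding; that judgment stays with the author.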

6. Invest in Human Editing

AI can clean language—but it cannot ensure journal compliance.

That’s where professional editing services matter. For example, many researchers rely on expert-level editing like that offered by Paperedit to align manuscripts with journal expectations before submission.

Structured editing approaches discussed on the Paperedit blog go beyond grammar fixes: they help you refine the quality of your argument.

Targeted services such as Paperedit's proofreading focus on peer-review expectations, not just readability.

You can also explore actionable strategies in Your Research Isn’t the Problem—Your Writing Is and understand journal rejection criteria with the guide: Journal Rejection Rate Explained (And How to Beat It).

Because at the end of the day:

Publishing is not about writing faster—it’s about meeting standards.

AI vs Human Editing: A Critical Comparison

| Feature | AI Tools | Professional Editing |
| --- | --- | --- |
| Speed | Very fast | Moderate |
| Accuracy | Variable | High |
| Context Awareness | Limited | Expert-level |
| Ethical Compliance | Not guaranteed | Strictly ensured |
| Journal Alignment | Weak | Strong |
| Citation Verification | Inconsistent | Thorough |
| Acceptance Impact | Risky | Positive |

The takeaway is obvious:

AI accelerates writing. Human editing secures publication.

The Role of Academic Integrity in the AI Era

Academic integrity is not negotiable.

Using AI irresponsibly can lead to:

- Retractions

- Ethical violations

- Institutional penalties

- Loss of credibility

Organizations like COPE and WHO emphasize transparency and accountability for a reason.

AI doesn’t remove responsibility—it increases it.

The Future of AI in Academic Publishing

AI isn’t going away. It will become more sophisticated, more integrated, and harder to detect.

But journals will evolve too.

Expect:

- Stricter AI usage disclosures

- Advanced detection systems

- Greater emphasis on raw data transparency

- Increased scrutiny of writing patterns

- Stronger reviewer training on AI detection

The gap between AI output and journal standards will shrink, but only for those who adapt responsibly.

Strategic Insight: What Smart Researchers Are Doing Differently

Top-performing researchers are not rejecting AI—they’re controlling it.

They:

- Use AI for early drafts only

- Rewrite critical sections manually

- Validate every citation

- Use professional editing before submission

- Align strictly with journal guidelines

They don’t treat AI as a shortcut.

Instead, they treat it as a controlled tool within a larger workflow.

That’s the difference between rejection and acceptance.

Final Takeaway

Here’s the reality most researchers don’t want to hear:

AI can help you write.

But it cannot make your work publishable on its own.

The gap exists because:

- AI prioritizes fluency

- Journals prioritize integrity

Bridging that gap requires:

- Awareness

- Control

- Human expertise

Ignore it, and your manuscript risks rejection.

Understand it, and you gain a serious competitive edge.

References